Access Reviews Are Broken: Why Rubber Stamping Happens (and What Agentic UARs Change)

Access reviews are meant to enforce least privilege, but at scale they often become rubber stamping: too many entitlements, too little context, and too much perceived risk in removing access. This article explains why it happens and what agentic UARs can change with better evidence, smarter prioritization, and audit-ready rationale.

Access reviews were built to enforce least privilege. At enterprise scale, many programs devolve into rubber stamping: reviewers approve nearly everything, not because leaders don’t care, but because the process is structurally optimized for throughput over decision quality.

The root causes are predictable:

- Too many entitlements per reviewer (sheer volume)

- Too little context per line item (why it exists, what it enables, whether it’s still needed)

- Too much perceived downside to removing access (disruption, escalations, broken workflows)

AI is moving into the access review workflow, raising the bar for what “modern” reviews should deliver: higher-quality decisions, faster cycles, and audit-ready rationale, without weakening human accountability.

The key question isn’t “Should we use AI?” It’s:

Does the approach measurably increase decision quality and auditability while preserving human accountability and permission boundaries?

TL;DR

- Rubber stamping is predictable when humans must make high-volume decisions with low context and high disruption risk.

- Reminders and escalations improve completion, not correctness.

- “Agentic UARs” can help only if they deliver signal upgrades: better evidence, smarter prioritization, and traceable rationale.

- The winning model is controlled autonomy: bounded actions where policy permits, with humans owning high-impact decisions.

- Evaluate any approach on data provenance, enforced policy constraints, audit outputs, integration, and rollback/exception handling.

What “good” looks like (measurable success criteria)

A modern access review program should show measurable movement on these outcomes:

- Fewer high-risk entitlements per user (privileged + sensitive)

- Higher revoke/downgrade rates where evidence supports it (not blanket approvals)

- Shorter review cycle time for high-risk items

- Audit packages that show evidence + rationale per decision (reproducible months later)

If the workflow only improves completion rates, it’s not fixing rubber stamping.
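To make these criteria concrete, here is a minimal Python sketch of how a program might compute two of them from exported decision records. The `ReviewDecision` fields and the metric names are illustrative assumptions, not the schema of any particular IGA tool.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class ReviewDecision:
    # Hypothetical record shape; real exports will differ.
    outcome: str           # "approve", "remove", "downgrade", "time-bound", "exception"
    high_risk: bool        # privileged or sensitive entitlement
    days_to_decide: float  # time from campaign start to decision


def program_metrics(decisions):
    """Summarize decision outcomes rather than completion."""
    total = len(decisions)
    changed = [d for d in decisions
               if d.outcome in {"remove", "downgrade", "time-bound"}]
    high_risk_days = [d.days_to_decide for d in decisions if d.high_risk]
    return {
        # Share of reviewed items where access was actually reduced.
        "change_rate": len(changed) / total if total else 0.0,
        # Median cycle time for the items that matter most.
        "median_high_risk_cycle_days": median(high_risk_days) if high_risk_days else None,
    }
```

A change rate near zero across a large campaign is a reasonable prompt to check for blanket approvals, though the right baseline will vary by organization.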

The predictable failure mode: what “rubber stamping” really is

Rubber stamping isn’t laziness. It’s what happens when humans are asked to make thousands of binary decisions with:

- Too many line items (applications, groups, roles, entitlements, exceptions)

- Too little decision context (why access was granted, whether it’s still used, what data it touches)

- Too much fear of disruption (removing access might break work, anger stakeholders, or trigger escalations)

When the marginal cost of a “deny” is high, and the reviewer can’t see evidence that makes a “deny” feel safe, approval becomes the default.

Why policy doesn’t fix execution

Organizations often respond to rubber stamping with more policy:

- More reminders and escalations

- Tighter deadlines

- Higher-level approvals

- More frequent campaigns

These levers can improve completion rates. They do not create decision signal.

If the review still lacks high-quality evidence and clear prioritization, escalating the workflow just moves the queue faster. You get more reviews done, not better decisions made.

What “Agentic UARs” actually are

Agentic User Access Reviews (Agentic UARs) are access-review workflows where AI assembles evidence and produces recommendations (approve/deny/modify/time-bound), and may trigger bounded follow-up actions (tickets, outreach, workflow steps) within defined policy and permission limits.

This is not the same as “black-box auto-revocation.” The point is higher-quality decisions at scale with clear human accountability.

Two requirements separate “agentic” from “assistive UI”:

- Controlled: the system operates inside explicit permission boundaries

- Auditable: the recommendation is traceable to evidence and policy

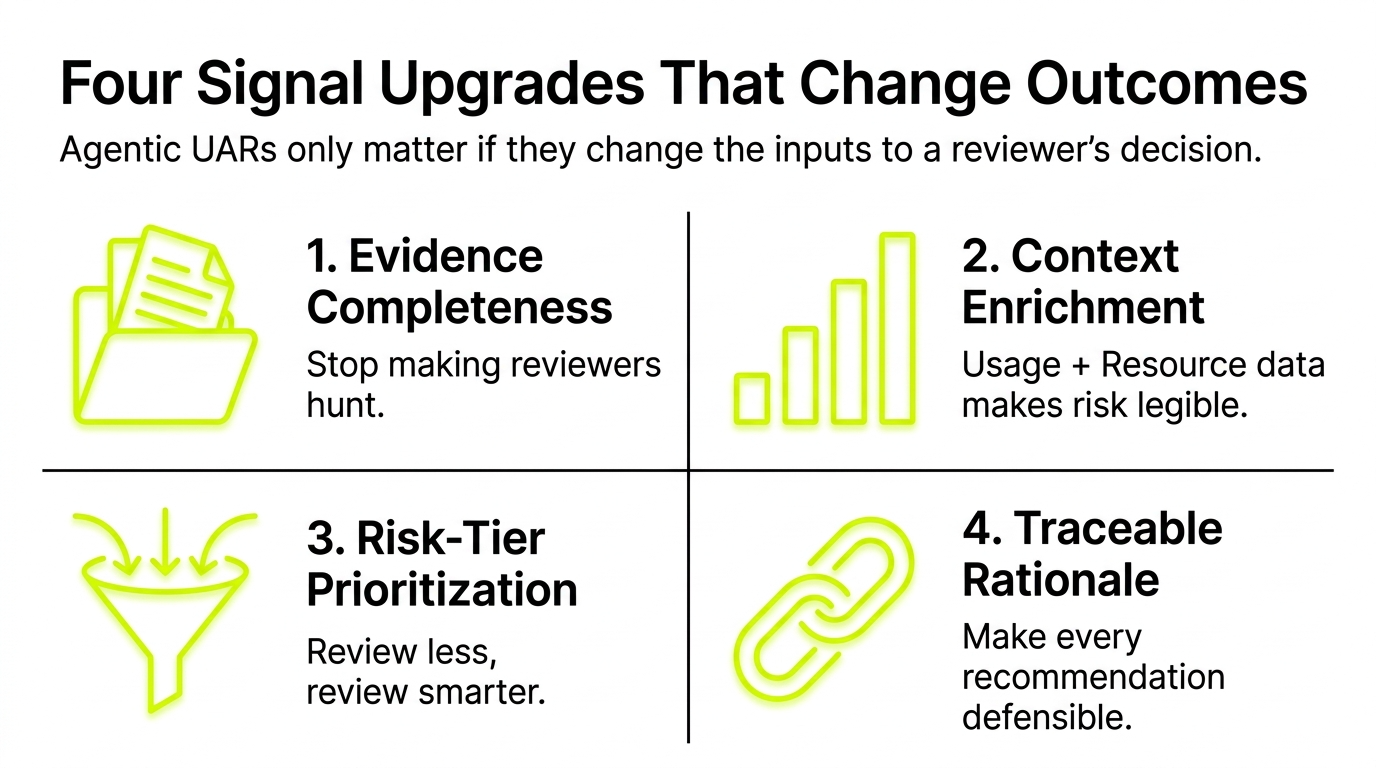

What changes outcomes: four “signal upgrades” that reduce rubber stamping

What’s changing isn’t that reviews are “AI-powered.” It’s that leading approaches are upgrading the decision inputs: entitlement context, usage signals, resource sensitivity, and a traceable explanation for every recommendation.

Agentic UARs only matter if they change the inputs to a reviewer’s decision. The most effective improvements cluster into four signal upgrades.

1) Evidence completeness (stop making reviewers hunt)

A reviewer can’t make a defensible call if the record is incomplete. High-signal reviews assemble:

- Identity context: manager, department, role, location, employment type, join date

- Entitlement context: what the access grants (and to what systems/data)

- Grant context: why it was granted (request, project, exception, policy)

- Temporal context: how long it has existed, last reviewed, next review

If evidence is missing or inconsistent, the system should surface that explicitly, so reviewers aren’t falsely confident.
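As an illustration of surfacing gaps explicitly, the sketch below checks a review record against a list of required evidence fields. The section and field names are assumptions loosely based on the four context types above; a real program would define its own.

```python
# Hypothetical required-evidence map, keyed by context type.
REQUIRED_EVIDENCE = {
    "identity": ["manager", "department", "role", "employment_type"],
    "entitlement": ["grants", "target_system"],
    "grant": ["reason"],
    "temporal": ["granted_on", "last_reviewed"],
}


def missing_evidence(record):
    """Return dotted names of required fields that are absent or empty."""
    gaps = []
    for section, fields in REQUIRED_EVIDENCE.items():
        data = record.get(section) or {}
        for field in fields:
            if data.get(field) in (None, ""):
                gaps.append(f"{section}.{field}")
    return gaps
```

Showing the returned list next to the line item tells the reviewer what is unknown, instead of letting an empty field read as "nothing to worry about."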

2) Usage + resource context enrichment (make the risk legible)

The biggest gap in classic UARs is that a reviewer sees a permission string, not the reality of what it enables.

High-signal enrichment can include:

- Last-access timestamps (recency) and usage patterns (frequency)

- Privilege tiering (admin vs. standard; write vs. read)

- Resource sensitivity (production vs. non-prod; regulated data; critical apps)

- Peer comparison (is this unusual for this role/team?)

The goal: turn “approve/deny” from a guess into a decision with evidence.
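A rough sketch of what enrichment could look like in code follows. It assumes each entitlement arrives as a dictionary with a `name`, an optional `last_used` date, and `privileged`/`sensitive` flags, and that peers are represented as sets of entitlement names. All of these shapes are hypothetical.

```python
from datetime import date


def enrich(entitlement, today, peer_entitlements):
    """Attach recency, privilege, sensitivity, and peer-comparison signals."""
    last_used = entitlement.get("last_used")
    # None means "no usage data available", which is different from "never used".
    days_idle = (today - last_used).days if last_used else None

    peers_with = sum(1 for peer in peer_entitlements if entitlement["name"] in peer)
    peer_share = peers_with / len(peer_entitlements) if peer_entitlements else None

    return {
        "name": entitlement["name"],
        "days_idle": days_idle,
        "privileged": entitlement.get("privileged", False),
        "sensitive": entitlement.get("sensitive", False),
        "peer_share": peer_share,  # fraction of peers holding the same entitlement
    }
```

With output like this, a reviewer sees "idle for 90 days, held by a quarter of the team" rather than a bare permission string.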

3) Risk-tier prioritization (review less, review smarter)

Most programs try to review everything with equal weight, and reviewers drown in the volume.

A more effective model is risk-tiered review:

- Focus human attention on high-risk access: privileged roles, sensitive data, anomalous access, exceptions

- De-emphasize low-risk, policy-aligned entitlements

- Use sampling or lighter-touch attestations where appropriate

Agentic workflows can help by continuously identifying what should rise to the top, so your best reviewers spend time where the risk is.
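One simple way to express risk tiering is a small rule function plus a sort. The thresholds below (90 idle days, 20% peer share) and the three tier names are placeholder assumptions for illustration, and the input dictionaries are a hypothetical shape carrying privilege, sensitivity, recency, and peer-comparison signals.

```python
def risk_tier(item, idle_threshold_days=90, rare_peer_share=0.2):
    """Assign a coarse tier from privilege, sensitivity, recency, and peer signals."""
    if item["privileged"] or item["sensitive"]:
        return "high"
    # Missing usage data is treated as idle so it is not silently de-emphasized.
    idle = item["days_idle"] is None or item["days_idle"] >= idle_threshold_days
    unusual = item["peer_share"] is not None and item["peer_share"] < rare_peer_share
    if idle or unusual:
        return "medium"
    return "low"


def build_queue(items):
    """Order the review queue so high-risk items reach reviewers first."""
    order = {"high": 0, "medium": 1, "low": 2}
    return sorted(items, key=lambda item: order[risk_tier(item)])
```

Low-tier items in such a queue are natural candidates for sampling or lighter-touch attestation, while high-tier items keep full human review.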

4) Traceable rationale (make every recommendation defensible)

A recommendation without rationale is just another checkbox.

Better systems generate audit-ready rationale that answers:

- What evidence was used?

- What policy or rule was applied?

- What anomalies or risk signals were detected?

- What alternative actions were considered (remove, downgrade, time-bound, exception)?

This is where “agentic” can be valuable: not because it decides for you, but because it creates a repeatable, reviewable decision record.
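A decision record of this kind can be sketched as a plain dictionary with a content fingerprint, so the same inputs always produce the same verifiable record. The field names are assumptions, and the sketch assumes the evidence is JSON-serializable.

```python
import hashlib
import json


def build_rationale(entitlement_id, recommendation, evidence, policy_id,
                    signals, alternatives):
    """Bundle the four rationale questions into one reproducible record."""
    record = {
        "entitlement_id": entitlement_id,
        "recommendation": recommendation,       # e.g. "downgrade"
        "evidence": evidence,                   # what evidence was used
        "policy_id": policy_id,                 # what policy or rule was applied
        "signals": sorted(signals),             # anomalies or risk signals detected
        "alternatives": sorted(alternatives),   # other actions considered
    }
    # Canonical JSON (sorted keys, fixed separators) keeps the hash stable.
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    record["fingerprint"] = hashlib.sha256(canonical.encode("utf-8")).hexdigest()
    return record
```

Storing the fingerprint alongside the record lets an auditor confirm months later that the rationale shown is the one originally generated.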

Executive guardrails: trust model before automation

AI in access reviews is not “set and forget.” For most enterprises, the winning approach is controlled autonomy: the system assembles higher-quality evidence and recommendations, takes bounded actions only where policy permits, and leaves ownership of decisions with humans.

When you hear “agentic,” ask how the system enforces these guardrails:

Permission boundaries

- Does the system operate only with explicitly granted permissions?

- Can it recommend actions without being able to execute them?

- If it can execute, is it limited to safe, policy-approved steps (e.g., open a ticket, request confirmation, initiate workflow)?

Human accountability

- Who owns the final decision for high-impact access?

- Are escalation paths clear (and not just “send more emails”)?

- Is there explicit separation between recommendation and approval?

Separation of duties

- Can the system both propose and implement sensitive changes?

- Are there checks to prevent a single actor (human or system) from unilaterally changing high-risk access?

Auditability by design

- Can you reproduce “why this was recommended” months later?

- Does the rationale survive tool changes, reviewer turnover, and org restructuring?

- Can you show evidence provenance (where did each data point come from)?

Put differently: trust the system’s boundaries before you trust its automation.
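To show the difference between a boundary that is enforced and one that is merely configured, here is a minimal sketch of an authorization check. The action names, the `BoundaryViolation` exception, and the rule that every access change needs an approver distinct from the proposer are illustrative assumptions, not a description of any product.

```python
# Hypothetical allowlist of actions the system may take on its own.
BOUNDED_ACTIONS = {"open_ticket", "request_confirmation", "start_workflow"}


class BoundaryViolation(Exception):
    """Raised when an action falls outside the permitted boundary."""


def authorize(action, proposed_by, approved_by=None):
    """Allow bounded actions freely; gate everything else behind a separate approver."""
    if action in BOUNDED_ACTIONS:
        return True
    # Any other action is treated as an access change.
    if approved_by is None:
        raise BoundaryViolation(f"{action} requires a named approver")
    if approved_by == proposed_by:
        # Separation of duties: the proposer cannot approve its own change.
        raise BoundaryViolation(f"{action} cannot be approved by its proposer")
    return True
```

The design choice worth noting is the default: anything not on the allowlist is blocked unless approved, rather than allowed unless flagged.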

How to evaluate “agentic UAR” approaches: 5 questions execs should ask

1) What data is the recommendation based on, and what’s the provenance?

- Which systems contribute evidence (IAM/IGA, HRIS, cloud platforms, SaaS audit logs, ticketing)?

- How does it handle missing, conflicting, or stale data?

- Can reviewers inspect the underlying evidence per recommendation?

2) What policy constraints are enforced (not just configured)?

- Are boundaries technically enforced or “best effort”?

- Are there hard stops for privileged access decisions?

- How are exceptions handled and tracked?

3) What does the audit output look like?

- Can it produce a rationale a third party would understand?

- Can you export evidence + decision records for audit and internal review?

- Does it support a consistent outcome taxonomy (approve, remove, downgrade, time-bound, exception)?

4) How does it integrate with your existing IAM/IGA workflows?

- Does it complement existing certifications/access packages, or require replacing them?

- Can it write back decisions cleanly (and safely) into the system of record?

- Does it support multi-environment reality (prod/non-prod), mergers, and hybrid identity?

5) What are the rollback and exception-handling mechanics?

- If a removal breaks work, what’s the fastest path to restore safely?

- Can you time-bound access instead of permanently removing it?

- Can the system learn from “false positive” removals without becoming permissive?
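As one possible shape for a safe rollback, the sketch below restores access as a time-bound grant that expires on its own instead of reverting to permanent access. The 14-day default and the record fields are assumptions for illustration.

```python
from datetime import datetime, timedelta, timezone


def restore_time_bound(entitlement_id, user, now, days=14):
    """Re-grant removed access with an expiry, so the rollback is not permanent."""
    return {
        "entitlement_id": entitlement_id,
        "user": user,
        "granted_at": now,
        "expires_at": now + timedelta(days=days),
        "reason": "rollback after removal",
    }


def is_active(grant, now):
    """A grant is active strictly before its expiry time."""
    return now < grant["expires_at"]
```

A restored grant like this gives the business owner time to justify the access while ensuring it is re-evaluated rather than quietly kept.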

If a solution can’t answer these clearly, it’s likely optimizing for review completion rather than review correctness.

The bottom line

Access reviews don’t fail because leaders don’t care. They fail because the traditional process forces humans to make high-volume decisions with low-quality evidence, and punishes them when they get it wrong.

Agentic UARs can help, but only when they deliver signal upgrades (better evidence, smarter prioritization, traceable rationale) within a model of controlled autonomy that preserves human accountability and enforces permission boundaries.

When that’s true, “agentic” isn’t a buzzword; it’s a path to access reviews that are both faster and defensible.