Why IAM Completeness and Accuracy Keeps Failing at Financial Institutions

For financial institutions, IAM completeness and accuracy (C&A) isn't primarily an audit problem. It is an operational execution problem that compounds until something changes about how the work gets done.

TL;DR

- IAM completeness and accuracy programs at large financial institutions don't fail from a lack of tooling. They fail because the operational work required to fix what the tools surface never gets done at scale.

- Every 90 days, the same backlog returns: orphaned accounts, missing ownership records, non-human identities (NHIs) with no expiration date, and access certifications that no reviewer can meaningfully validate.

- Agentic AI doesn't close this gap by automating decisions. It closes it by executing the remediation work your team has always known needs to happen but has never had the capacity to finish.

- Alex, Twine's AI Digital Employee for IAM, takes ownership of the communication, follow-up, evidence collection, and data remediation work so C&A holds up under audit without burning through your team's capacity to get there.

Every quarter, the same report lands. Accounts with no owner. Entitlements with no business justification on file. HR records that don't match what's in the directory. NHIs that have been running for two years with no expiration date and no one who can explain what they do. The IAM team knows what needs to be fixed. They've known for months.

But the C&A cycle doesn't start with the data cleanup. It starts with a follow-up email to an application owner who hasn't responded. Then another. Then a calendar invite to get ten minutes on their schedule. Then an escalation to their manager because the review deadline is three days out and nothing has come back. Someone is reconciling the access review population against HR. There are accounts that don't match. There are systems that weren't included at all.

The problem isn't visibility. It's everything that happens after visibility: the follow-up loop, the communication overhead, the evidence assembly, the thousands of individual data corrections across systems that don't talk to each other, owned by teams who have other priorities. That is the completeness and accuracy problem in financial services IAM. None of it improves until something changes about how the operational work gets done.

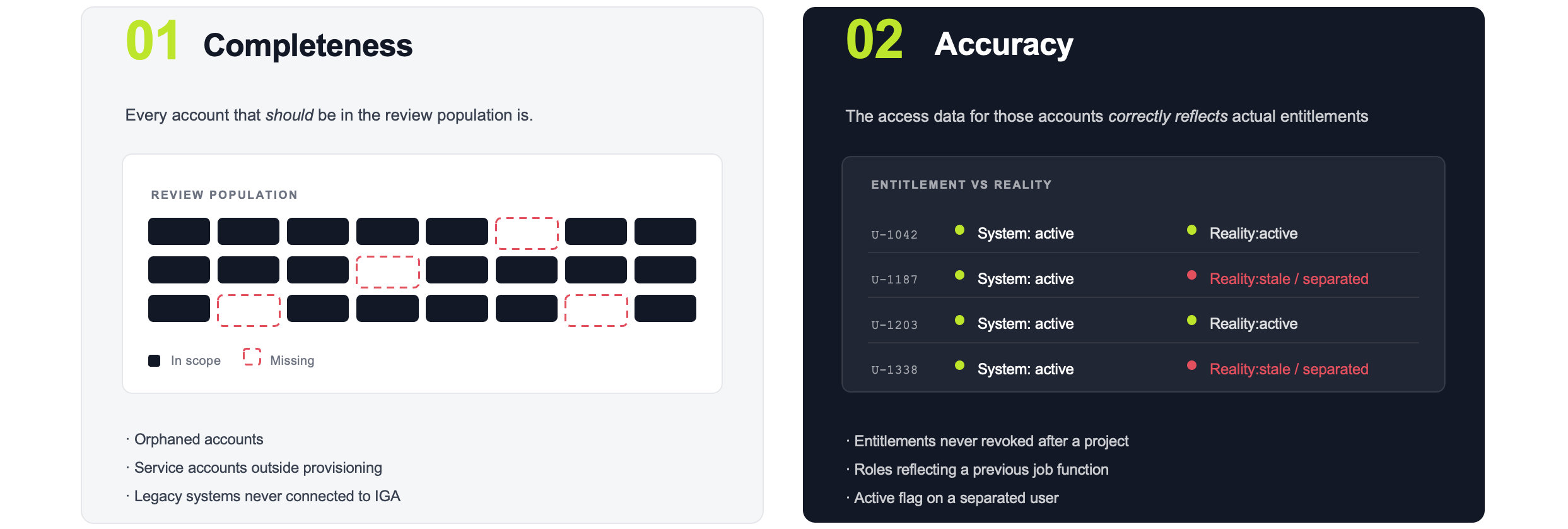

What Completeness and Accuracy Mean in IAM Audits: A Precise Definition

In IAM, completeness means every account that should be included in an access review population was included. Accuracy means the access data for those accounts correctly reflects actual entitlements at the time of review. Both are standard ITGC test points under SOX and similar frameworks.

Both are testable. Both are commonly found deficient. And both are often treated as audit findings when they are, in fact, operational failures.

A completeness gap means accounts fell out of the review population entirely: an orphaned account, a service account added outside normal provisioning, a legacy application or standalone system that was never connected to the IGA platform. The review happened, but it did not cover everything it should have.

An accuracy gap means the data about accounts was wrong. An entitlement approved six months ago for a temporary project that was never revoked. A role assignment reflecting a previous job function because a mover event was never fully processed. A user marked as active who separated four weeks ago.

Neither is primarily an audit problem. Both are operational problems that become visible in audits, and both are made significantly worse by the labor that surrounds them.
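To make the two definitions concrete, here is a minimal sketch of both checks as set comparisons. It assumes two hypothetical inputs that are not from any specific product: `actual`, the entitlements each in-scope account really holds in the source systems, and `reviewed`, the entitlements shown to certifiers in the review population.

```python
def completeness_gaps(actual: dict[str, set[str]],
                      reviewed: dict[str, set[str]]) -> set[str]:
    """Accounts that are in scope but never reached the review population."""
    return set(actual) - set(reviewed)


def accuracy_gaps(actual: dict[str, set[str]],
                  reviewed: dict[str, set[str]]) -> dict[str, dict[str, set[str]]]:
    """For accounts that were reviewed, entitlements where the review data was wrong."""
    gaps = {}
    for account_id in sorted(actual.keys() & reviewed.keys()):
        # Held in the system, but never shown to the certifier.
        not_in_review = actual[account_id] - reviewed[account_id]
        # Shown to the certifier, but not actually held in the system.
        not_in_system = reviewed[account_id] - actual[account_id]
        if not_in_review or not_in_system:
            gaps[account_id] = {
                "not_in_review": not_in_review,
                "not_in_system": not_in_system,
            }
    return gaps
```

The checks themselves are trivial. The hard part, as the rest of this article argues, is producing a trustworthy `actual` across every in-scope system and then getting the gaps fixed.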

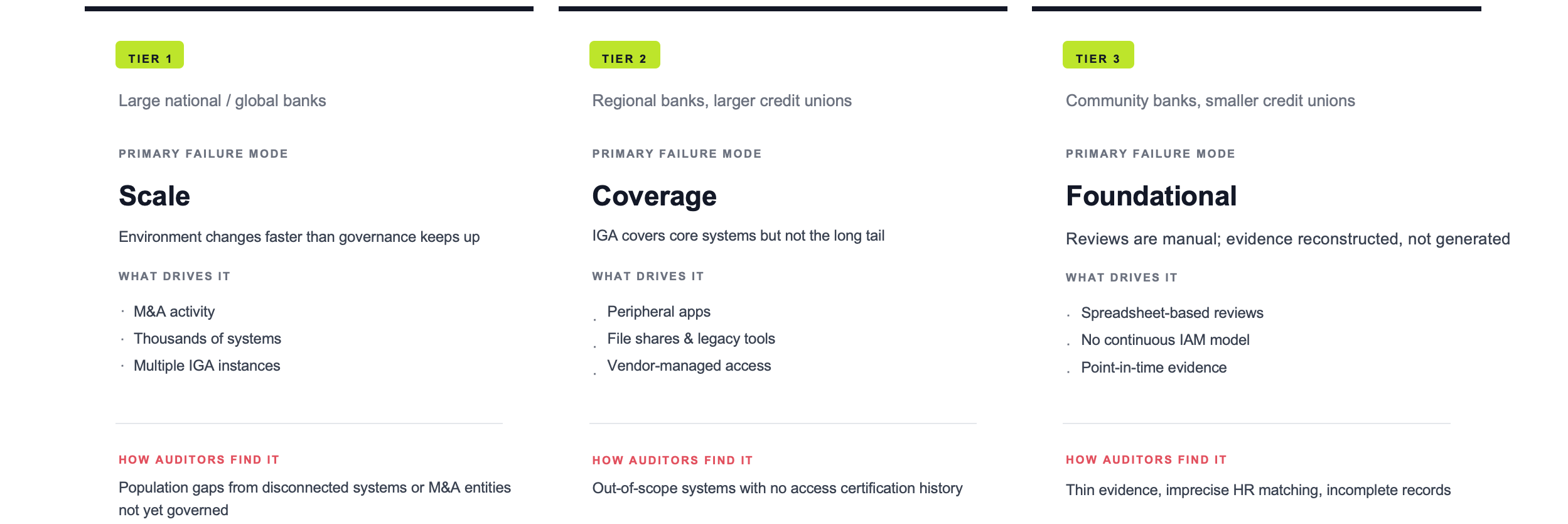

How the C&A Problem Differs Across Tier 1, Tier 2, and Tier 3 Financial Institutions

The failure modes are not uniform. Where the gaps concentrate, and what it costs to close them, depend on the size and maturity of the institution.

The tiers look different on the surface. The underlying problem is the same: identity data accumulates errors, lifecycle events do not fully propagate, application owners do not respond, and certifiers ask questions that cost analyst time to answer. The gap between what the IAM system shows and what is actually true grows until something forces a cleanup.

Why C&A Keeps Recurring: The Data Gap Is Not the Only Problem

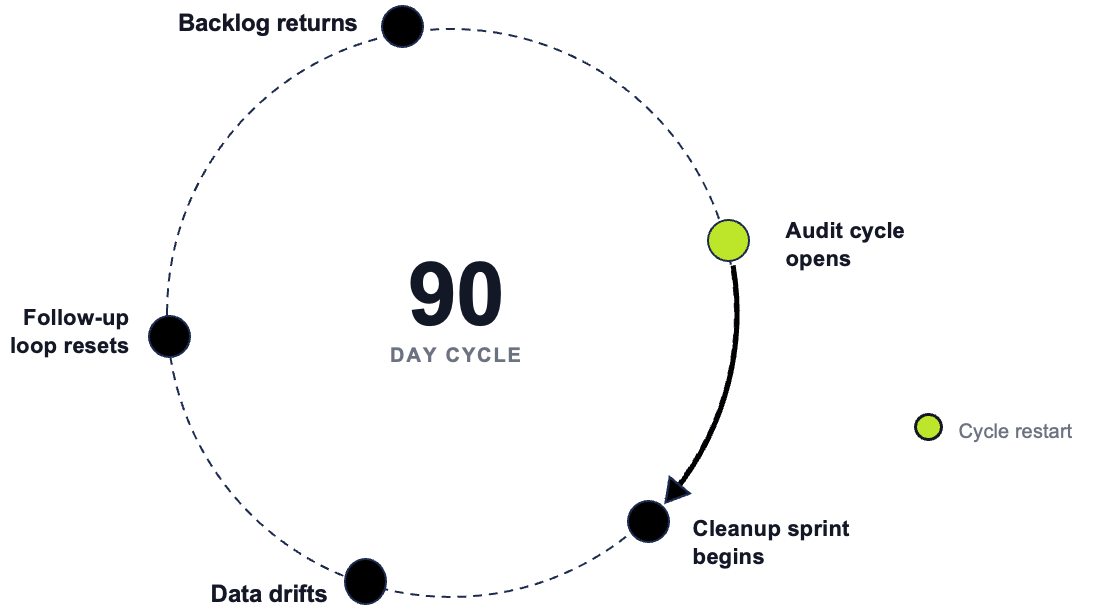

Most IAM teams understand the data drift problem. They run a cleanup sprint before each review, reconcile the population, patch the obvious mismatches, and get the review done on cleaner data. And then it happens again next quarter.

The data sprint addresses only one of three structural problems.

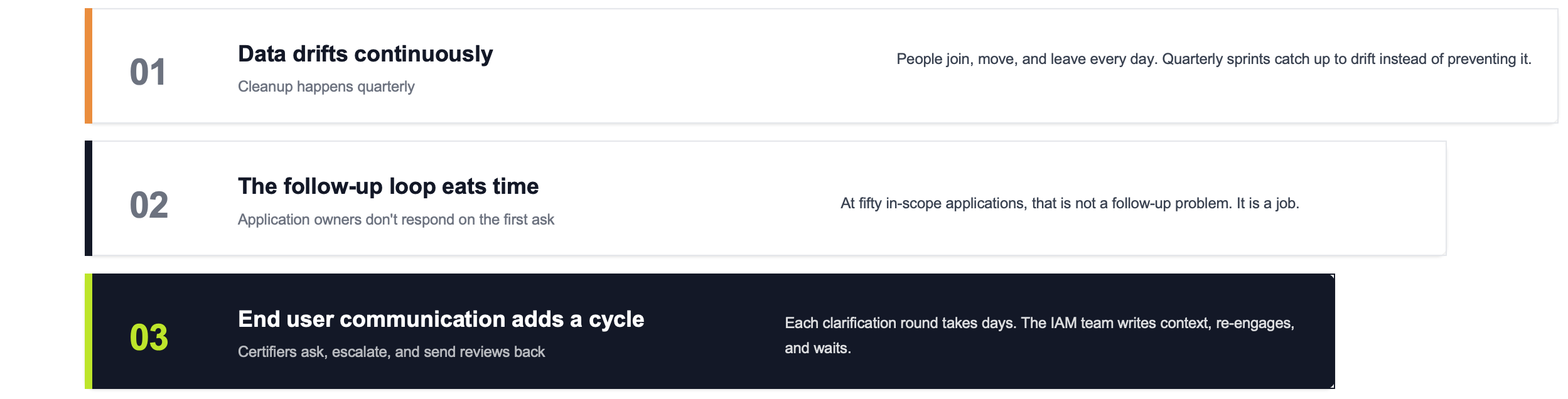

Data drifts continuously, but cleanup happens quarterly.

Identity data does not drift once a quarter. People join, move, and leave every day. Access gets granted for temporary reasons and stays. The quarterly sprint is catching up to accumulated drift rather than preventing it. Without a continuous mechanism, the same errors re-form between every cycle.
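One way to picture a continuous mechanism is a small check that runs daily instead of quarterly. The sketch below is illustrative only: the record shapes (`employee_id`, `active`, `termination_date`) are assumptions, not the schema of any particular HR system or directory.

```python
from datetime import date


def find_drift(hr_records: dict[str, dict],
               directory_accounts: dict[str, dict],
               as_of: date) -> dict[str, list[str]]:
    """Flag active directory accounts that no longer line up with HR."""
    orphaned = []      # active account with no matching HR record
    still_active = []  # active account whose owner has already separated
    for account_id, account in directory_accounts.items():
        if not account["active"]:
            continue
        hr = hr_records.get(account.get("employee_id"))
        if hr is None:
            orphaned.append(account_id)
        elif hr["termination_date"] is not None and hr["termination_date"] <= as_of:
            still_active.append(account_id)
    return {
        "orphaned": sorted(orphaned),
        "still_active_after_separation": sorted(still_active),
    }
```

Run every day, a check like this surfaces a separation within hours rather than at the next quarterly sprint.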

The follow-up loop eats time that should go elsewhere.

Application owners do not respond on the first ask. They often do not respond on the second or third. IAM analysts spend significant time in each review cycle chasing responses, re-sending requests, and escalating to managers. At fifty in-scope applications, that is not a follow-up problem. It is a job.
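The follow-up loop is simple enough to write down as a rule, which is part of why it is such a poor use of analyst time. The sketch below is a hypothetical policy, not a description of any product's behavior: the thresholds (`remind_every_days`, `max_reminders`, `escalate_within_days`) and the `ReviewRequest` fields are assumptions chosen for illustration.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class ReviewRequest:
    application: str
    owner: str
    last_contact: date
    reminders_sent: int = 0
    responded: bool = False


def next_action(req: ReviewRequest, today: date, deadline: date,
                remind_every_days: int = 3, max_reminders: int = 2,
                escalate_within_days: int = 3) -> str:
    """Decide the next follow-up step for one outstanding review request."""
    if req.responded:
        return "none"
    # Close to the deadline with no response: go to the owner's manager.
    if (deadline - today).days <= escalate_within_days:
        return "escalate_to_manager"
    # Not enough time has passed since the last contact.
    if (today - req.last_contact).days < remind_every_days:
        return "wait"
    # Reminders exhausted: escalate instead of asking again.
    if req.reminders_sent >= max_reminders:
        return "escalate_to_manager"
    return "send_reminder"
```

Evaluating this rule once per day for fifty applications is trivial for software and a recurring drain for a person.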

End user communication creates its own cycle.

Certifiers who do not understand what they are certifying do not simply approve or reject. They ask questions, escalate, and send the review back for clarification. Leadership certifying access they have never directly managed requires explanations. The IAM team writes context, re-engages, and waits. Each round takes days.

Institutions that address only the data side of C&A are fixing one of three problems. The follow-up loop and the communication overhead are structural contributors to why C&A never stays fixed, and they require their own operational solution.

How Agentic AI Takes Ownership of the Work That Makes C&A Hold Up Under Audit

The reason C&A keeps failing is not that teams lack the right strategy. It is that the operational work required to maintain it is too large for a human team to absorb continuously. The follow-up cycles, the end user communication, the evidence assembly, the data cleanup: these collectively consume capacity that is not available at the pace the work demands.

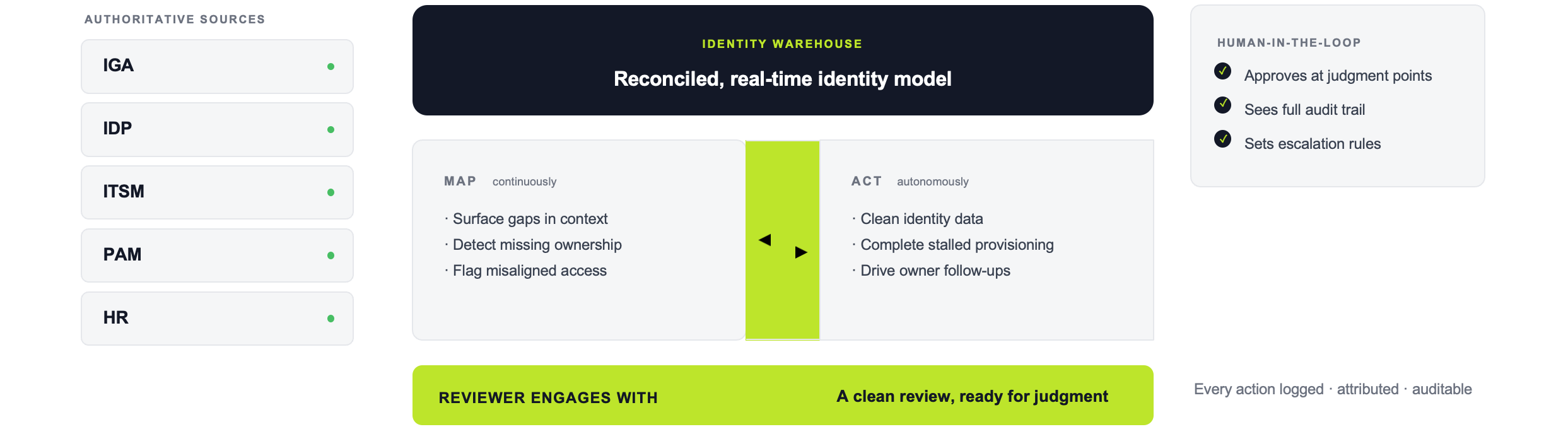

Alex, our first AI Digital Employee for IAM, is designed to take ownership of that work. Not to assist with it. To do it.

End user communication.

Alex communicates directly with application owners and certifiers through the tools they already use. It sends the initial outreach, follows up on non-responses, escalates through appropriate channels, and provides the context certifiers need to act without going back to the IAM team for an explanation. The human reviewer sees a clean review waiting for a decision, not an open thread that has been sitting for two weeks.

Heavy lifting before human review.

Alex kicks off certification processes on schedule, handles evidence collection, and completes population completeness checks before the human certification window opens. By the time a reviewer engages, Alex has already done three weeks of work. The reviewer applies judgment. Alex has done everything that comes before it.

Active data remediation.

Alex operates on a continuous Map and Act loop. The Map phase connects to your IGA, IDP, ITSM, and PAM systems to generate a real-time model of your environment, surfacing gaps, missing ownership, and misaligned access in context. The Identity Warehouse at its core reconciles identities from all authoritative and complementary sources, so the access review population reflects a coherent view of your environment, not a patchwork of system exports. The Act phase deploys agents to clean identity data, fill in missing attribution, complete stalled provisioning loops, and flag stale entitlements for review.
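As a rough illustration of what reconciling identities across sources involves, the sketch below merges records from several systems on a normalized key and reports the gaps. It is a simplified stand-in, not the Identity Warehouse's actual implementation: the `principal` and `owner` fields, the source names, and the matching rule (trimmed, lowercased key) are all assumptions.

```python
from collections import defaultdict


def reconcile(sources: dict[str, list[dict]]) -> dict[str, dict]:
    """Merge records from every source into one entry per normalized principal."""
    merged = defaultdict(lambda: {"sources": set(), "owner": None})
    for source_name, records in sources.items():
        for record in records:
            key = record["principal"].strip().lower()
            identity = merged[key]
            identity["sources"].add(source_name)
            # Keep the first owner any source can supply.
            if identity["owner"] is None and record.get("owner"):
                identity["owner"] = record["owner"]
    return dict(merged)


def findings(identities: dict[str, dict], authoritative: str = "hr") -> list[tuple[str, str]]:
    """List gaps worth acting on: no authoritative record, or no owner."""
    results = []
    for key in sorted(identities):
        identity = identities[key]
        if authoritative not in identity["sources"]:
            results.append((key, "no_authoritative_record"))
        if identity["owner"] is None:
            results.append((key, "missing_owner"))
    return results
```

In this framing, the output of `findings` is the work queue for the Act phase: each item is a missing attribution or a mismatch that someone, or something, has to resolve.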

Certification coverage.

Every in-scope account and entitlement is included: not just the systems connected to the IGA platform today, but the legacy applications, the disconnected tools, and the accounts that typically fall through the cracks. Coverage gaps are identified and addressed before they become audit findings.

Every action is logged, attributed, and auditable. Human approval is required at the points where judgment calls matter. This is not automation running unsupervised. It is an AI working under your control, with the same conservative escalation behavior you would want from a careful analyst.
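The pattern described here, an attributed log plus a human approval gate, can be sketched in a few lines. This is a generic illustration, not Alex's implementation: the action names, the `REQUIRES_APPROVAL` policy, and the log fields are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical policy: actions that need a human decision before they run.
REQUIRES_APPROVAL = {"revoke_entitlement", "disable_account"}


@dataclass
class AuditLog:
    entries: list[dict] = field(default_factory=list)

    def record(self, actor: str, action: str, target: str, status: str) -> dict:
        """Append one attributed, timestamped entry."""
        entry = {
            "at": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "action": action,
            "target": target,
            "status": status,
        }
        self.entries.append(entry)
        return entry


def submit(log: AuditLog, actor: str, action: str, target: str) -> str:
    """Run low-risk actions directly; hold judgment calls for a human."""
    status = "pending_approval" if action in REQUIRES_APPROVAL else "executed"
    log.record(actor, action, target, status)
    return status


def decide(log: AuditLog, approver: str, action: str, target: str, approved: bool) -> str:
    """Record the human decision on a held action under the approver's name."""
    status = "approved" if approved else "rejected"
    log.record(approver, action, target, status)
    return status
```

Because the agent's proposal and the human's decision are separate entries with separate actors, an auditor can see who proposed a change and who authorized it.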

The result: identity data quality improves continuously, the follow-up loop is handled without analyst involvement, certifiers arrive with what they need to act, and coverage gaps are closed before the review opens. C&A is maintained as a byproduct of operations, not assembled under deadline pressure.

What IAM Completeness and Accuracy Looks Like When It Is Working

The institutions that get C&A right are not the ones that run a better cleanup sprint each quarter. They are the ones that stopped treating it as an audit preparation task and started treating it as an operational standard. Access review populations are complete and reconciled, certifiers have the context to act, application owners are reached without analyst follow-up, and evidence is generated as a byproduct of operations rather than assembled under deadline pressure. That is what changes when the operational work is handled continuously instead of being caught up on each cycle.

Frequently Asked Questions

What does completeness and accuracy mean in IAM audits?

In IAM audit contexts, completeness means every account that should be included in an access review was actually included in the review population. Accuracy means the access data for those accounts correctly reflected actual entitlements at the time of the review. Auditors test both as part of IT General Controls (ITGC) under SOX and similar frameworks. A deficiency in either can be reported as a control weakness.

Why do C&A findings keep recurring even after remediation?

Recurring C&A findings are almost always the result of three compounding problems: identity data that drifts continuously but is cleaned only quarterly, a follow-up loop with application owners that resets every review cycle, and end user communication overhead that slows certifications without improving their quality. Quarterly remediation addresses the data surface but not the operational labor underneath it. Without a continuous model addressing all three, the same problems re-form between every audit cycle.

What is the difference between a completeness gap and an accuracy gap in IAM?

A completeness gap means accounts or systems were missing from the review population entirely. Common causes include orphaned accounts, service accounts added outside normal provisioning, and legacy or disconnected applications not connected to the IGA platform. An accuracy gap means accounts were included but the access data about them was wrong, such as a role assignment that was never updated after a job change, or access that remained active after a separation.

How does an AI Digital Employee handle the follow-up and communication work in a user access review?

An AI Digital Employee like Alex handles the full communication lifecycle: initial outreach to application owners, follow-ups on non-responses, escalations through appropriate channels, and context-setting for certifiers who need to understand what they are certifying before they can act. This work happens before the human certification window opens, so reviewers engage with a process that is already moving. The IAM team applies judgment to decisions. Alex handles everything that comes before those decisions.

Does improving IAM data quality require replacing existing IGA tools?

No. The goal is to work on top of existing IGA, IDP, ITSM, and PAM infrastructure. The Identity Warehouse reconciles identities from all those authoritative sources rather than replacing them. For Tier 1 institutions this means extending continuous governance across a complex, multi-platform environment. For Tier 2 and Tier 3, it means bringing the long tail of unconnected systems and manual processes into a model that maintains data quality and review readiness automatically.